Green Tests Are Evidence, Not Approval: Why Multi-Agent Engineering Needs ACS

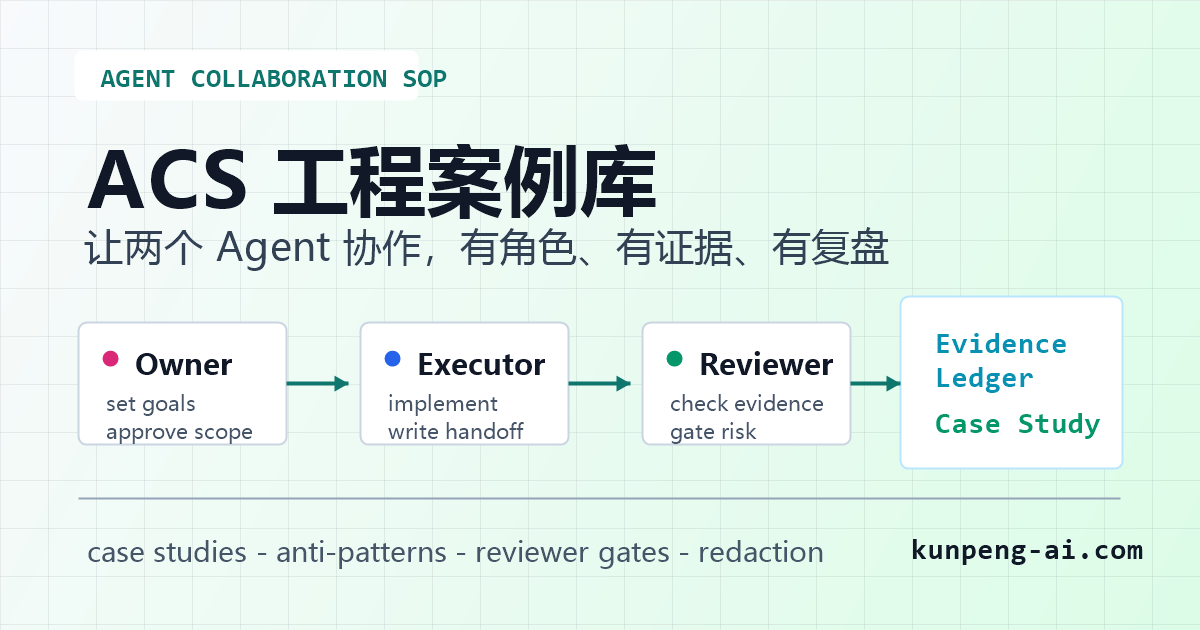

ACS, short for Agent Collaboration SOP, is a vendor-neutral workflow for teams that use multiple AI coding agents. It separates human ownership, agent execution, and independent review, and backs them with evidence ledgers, case studies, anti-patterns, and redaction gates.

Main answer

ACS is not about making two agents talk more. It is about turning Owner decisions, Executor delivery, Reviewer checks, and evidence records into a repeatable engineering workflow.

Who should read this

Teams using Codex, Claude Code, OpenClaw, Hermes, or other coding agents for real repositories, PRs, deployments, upstream work, or public technical content.

Key check

The public ACS project includes README guidance, message routing rules, file-first handoffs, evidence ledgers, reviewer reports, redacted case studies, anti-patterns, and redaction rules.

Next step

Start with one real project phase: let the Executor write a handoff, let the Reviewer check evidence and scope, then let the Owner decide from files instead of chat memory.

What You'll Learn

- What problem ACS solves in multi-agent engineering

- Why the Executor must not approve its own work

- Why green tests are evidence, not approval

- How Evidence Ledger and Reviewer Report files make collaboration durable

- Why redacted case studies and anti-patterns are worth maintaining

The Hard Part Is Not Always Writing Code

Many teams are now using more than one AI coding agent.

One agent implements a change. Another agent reviews it. A human owner makes the final call. On paper, this sounds reasonable.

In practice, it often gets messy.

The executor says the task is done because the tests passed. The reviewer repeats the same command and calls it verified. The owner receives a confident summary in chat, but the actual evidence is scattered across terminal output, screenshots, local files, and memory from the previous conversation.

That is not a reliable engineering workflow.

The problem is not that AI agents cannot write code. The problem is that a team needs a collaboration chain it can inspect later.

This is why we started maintaining Agent Collaboration SOP, or ACS.

ACS is a vendor-neutral, file-first workflow for multi-agent engineering collaboration. It is designed for teams using Codex, Claude Code, OpenClaw, Hermes, or similar coding agents in real projects.

What Goes Wrong Without a Shared Process

The common failure pattern is simple:

- the Executor changes code, runs tests, then approves its own work;

- the Reviewer checks only the green test result;

- the Owner makes a decision from chat instead of a durable record;

- the handoff says one thing, but the design document or repository state says another;

- screenshots are missing for UI, deployment, or upstream evidence;

- public materials accidentally include local paths, private repository names, customer context, tokens, or internal notes.

These are not model-intelligence problems. They are process and evidence problems.

If the work only lives in chat, the team cannot reliably replay what happened. If the evidence only lives in a terminal window, the next agent cannot use it. If the reviewer only checks whether tests are green, the actual release risk may stay invisible.

ACS tries to make that chain inspectable.

ACS In One Sentence

ACS stands for Agent Collaboration SOP.

It defines a practical collaboration model with three roles:

| Role | Responsibility |
|---|---|
| Owner | Sets goals, scope, business constraints, release decisions, upstream PR boundaries, and final approval. |
| Executor Agent | Designs, implements, tests, records evidence, and writes the handoff. |
| Reviewer Agent | Independently checks goals, scope, architecture, test coverage, screenshots, evidence quality, redaction, and release risk. |

The key rule is:

The Executor does not approve itself.

That rule sounds obvious in human engineering teams. It becomes even more important with agents.

An agent can follow its own assumptions very confidently. It may truly run tests. It may truly fix part of the issue. But that still does not prove the work is inside scope, aligned with the product goal, safe to publish, or ready for an upstream maintainer to review.

That is why ACS separates execution from approval.
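The separation of roles can be checked mechanically. The following is a minimal sketch, not part of the ACS project itself: the `Decision` record, its field names, and the `role_violations` helper are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """Hypothetical record of who did what for one task."""
    task_id: str
    executor: str  # agent that implemented the change
    reviewer: str  # agent that wrote the reviewer report
    approver: str  # whoever gave final approval


def role_violations(decision: Decision, owners: set[str]) -> list[str]:
    """Return the role-separation problems found in a decision record."""
    problems = []
    if decision.approver == decision.executor:
        problems.append("executor approved its own work")
    if decision.reviewer == decision.executor:
        problems.append("reviewer is the same agent as the executor")
    if decision.approver not in owners:
        problems.append("final approval did not come from an Owner")
    return problems
```

An empty list means the record respects the three-role split. It says nothing about whether the review itself was thorough.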

Green Tests Are Evidence, Not Approval

The line we repeat inside ACS is:

Green tests are evidence, not approval.

Tests matter. A failing test should block many changes. But a passing test run only proves that a specific command passed in a specific environment.

It does not prove:

- the change stayed within the approved scope;

- the UI was actually inspected;

- screenshots exist for visual or browser-based behavior;

- docs, handoff, and implementation are aligned;

- public content has been redacted;

- the release is safe;

- an upstream PR is understandable for maintainers;

- no unrelated local changes were accidentally included.

This is where a Reviewer Agent becomes useful. The reviewer should not just rerun the same command and declare victory. It should inspect the evidence chain.
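One way to make the distinction concrete is to treat the test result as a single field among several. This is a minimal sketch under assumed names: the `Evidence` fields loosely mirror the list above and are not an official ACS schema.

```python
from dataclasses import dataclass, fields


@dataclass
class Evidence:
    """Hypothetical evidence checklist; a green test run is only one entry."""
    tests_passed: bool = False
    scope_checked: bool = False
    ui_inspected: bool = False
    docs_aligned: bool = False
    redaction_done: bool = False
    worktree_clean: bool = False


def missing_evidence(evidence: Evidence) -> list[str]:
    """Names of the checklist items that have not been satisfied yet."""
    return [f.name for f in fields(evidence) if not getattr(evidence, f.name)]


def ready_for_owner(evidence: Evidence) -> bool:
    """True when nothing is missing. The Owner still makes the decision."""
    return not missing_evidence(evidence)
```

With only `tests_passed=True`, `ready_for_owner` returns `False` and `missing_evidence` lists the five remaining items. Even a complete checklist only means the Owner has enough to decide; it does not grant approval.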

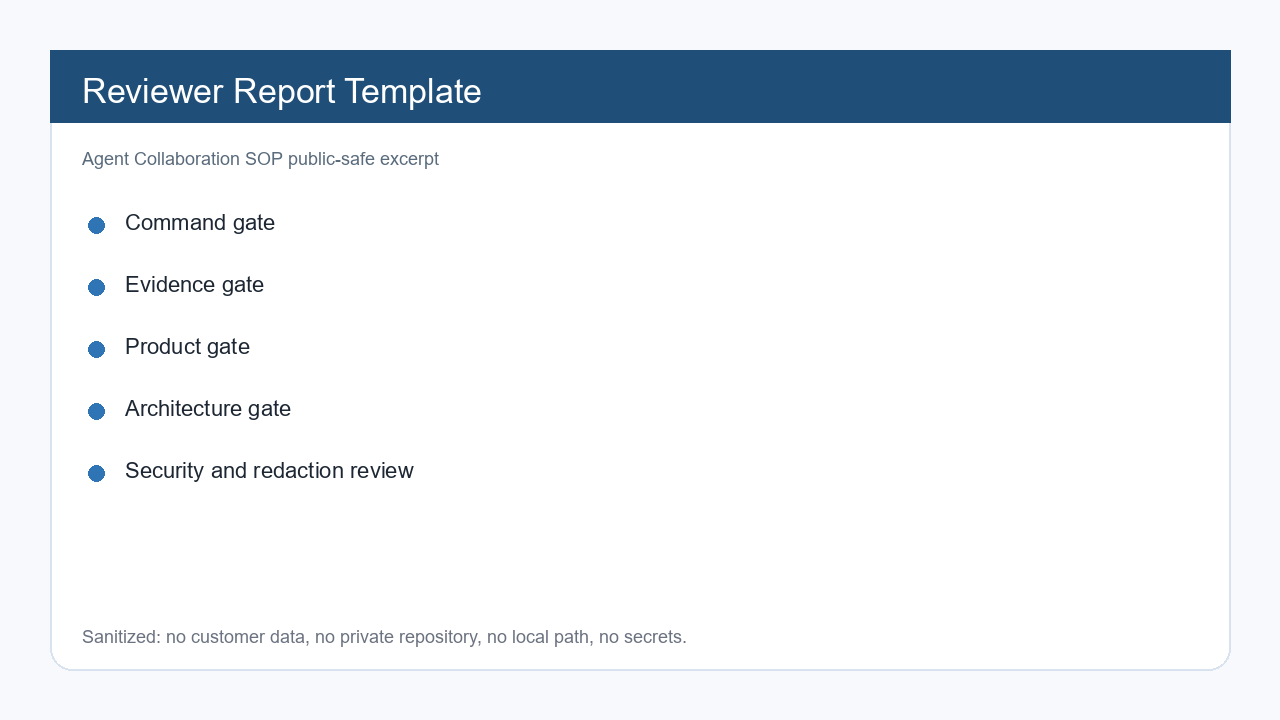

What A Reviewer Should Check

In ACS, a reviewer report should answer more than “did tests pass?”

A useful review checks:

- the stated goal and current implementation still match;

- the diff only touches the intended files;

- the build and test commands were actually run;

- screenshots or browser checks exist when UI behavior is involved;

- local paths, secrets, customer data, and private repository details are not exposed;

- handoff files and design files do not drift apart;

- the remaining risk is written down;

- the Owner has enough evidence to decide.

This is different from traditional code review, and it is also different from prompt refinement.

The goal is not to make an agent “sound more careful.” The goal is to leave behind files another person or agent can inspect.
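One of the checks above, whether the diff only touches the intended files, lends itself to a small script. The sketch below assumes the approved scope is written down as glob patterns; the function name and the example patterns are hypothetical.

```python
from fnmatch import fnmatchcase


def out_of_scope(changed_files: list[str], allowed_patterns: list[str]) -> list[str]:
    """Return changed files that match none of the approved glob patterns.

    Note: in fnmatch-style patterns, '*' also matches '/' characters,
    so 'src/billing/*' covers nested directories as well.
    """
    return [
        path
        for path in changed_files
        if not any(fnmatchcase(path, pattern) for pattern in allowed_patterns)
    ]
```

For example, with the approved scope `["src/billing/*", "tests/billing/*"]`, a diff touching `src/billing/invoice.py` and `README.md` would flag `README.md` for the reviewer to question.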

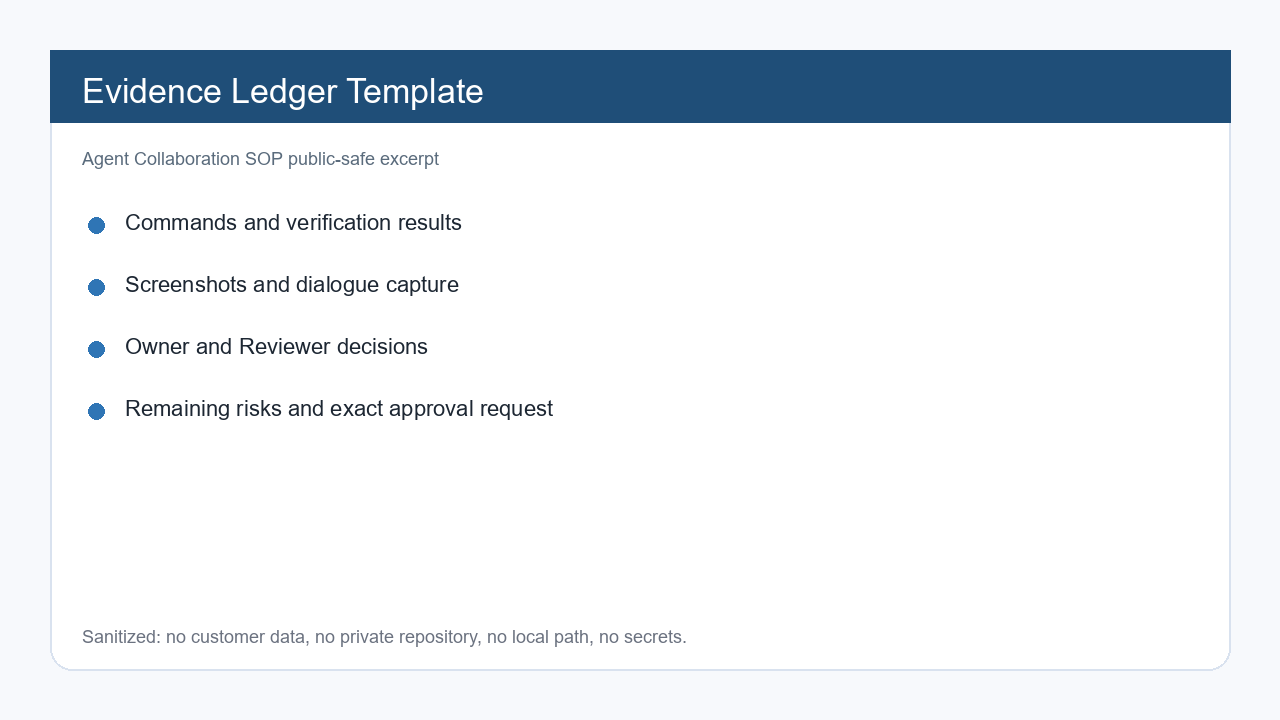

File-First Evidence

Chat is useful for coordination. It is a poor long-term engineering record.

ACS uses a file-first approach:

- Executor handoff;

- Reviewer report;

- Evidence ledger;

- Owner consensus report;

- case study;

- anti-pattern review.

The handoff should not be a long chat paste. It should point to files, commands, screenshots, decisions, and remaining risk.

The evidence ledger should make the work replayable:

- What was the goal?

- Which files changed?

- Which commands were run?

- What was the result?

- Which screenshots prove UI or external state?

- What risk remains?

- Which rule should be reused next time?

This is where ACS becomes more than a workflow document. It becomes team memory.
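The ledger questions above map naturally onto a small record that can be saved as a file. This is an illustrative sketch only: the `LedgerEntry` and `CommandRun` types and their field names are assumptions, not the format used in the ACS repository.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class CommandRun:
    command: str  # exact command line that was run
    result: str   # short outcome, e.g. "passed" or "failed: 2 tests"


@dataclass
class LedgerEntry:
    """Hypothetical evidence ledger entry, one per task or phase."""
    goal: str
    files_changed: list[str]
    commands: list[CommandRun]
    screenshots: list[str] = field(default_factory=list)
    remaining_risk: str = ""
    reusable_rule: str = ""


def to_json(entry: LedgerEntry) -> str:
    """Serialize the entry so it can be committed next to the handoff."""
    return json.dumps(asdict(entry), indent=2)
```

Writing the output of `to_json` to a file in the repository gives the next agent something to read instead of relying on chat history.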

Why ACS Maintains Case Studies

ACS keeps two kinds of long-term assets:

- `case-studies/`: redacted examples of collaboration loops that worked or surfaced a useful risk;

- `anti-patterns/`: recurring failure modes that look harmless until they cause rework.

The point is not to collect stories for their own sake. The point is to turn one hard-earned lesson into a reusable pattern.

Here are examples of the kind of cases ACS is designed to preserve.

Case 1: Green Tests But Hidden Diff

The Executor reported that tests passed and that no product code had changed.

The Reviewer did not stop at the test result. It checked both the staged and the unstaged diffs and found that the repository state did not match the handoff.

The reusable rule was simple: baseline closure requires test evidence, repository-state evidence, and scope evidence.
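A repository-state check of this kind can be sketched by parsing the output of `git status --porcelain`, where the first column describes the index (staged) and the second the working tree (unstaged). The helper names below are hypothetical, and the parser is deliberately simplified: it does not unquote unusual path names, and a rename line is kept as the raw `old -> new` text.

```python
def classify_porcelain(output: str) -> dict[str, list[str]]:
    """Split `git status --porcelain` (v1) output into staged/unstaged/untracked."""
    state: dict[str, list[str]] = {"staged": [], "unstaged": [], "untracked": []}
    for line in output.splitlines():
        if len(line) < 4:
            continue  # not a valid "XY path" line
        index_status, worktree_status, path = line[0], line[1], line[3:]
        if index_status == "?" and worktree_status == "?":
            state["untracked"].append(path)
            continue
        if index_status != " ":
            state["staged"].append(path)
        if worktree_status != " ":
            state["unstaged"].append(path)
    return state


def contradicts_no_change_claim(state: dict[str, list[str]]) -> bool:
    """True if the handoff says 'nothing changed' but the repository disagrees."""
    return any(state.values())
```

The input could come from `subprocess.run(["git", "status", "--porcelain"], capture_output=True, text=True, check=True).stdout`. The output must not be stripped before parsing, because a leading space in the first column is meaningful.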

Case 2: Design Review Before Coding

The Executor prepared a design package with BDD scenarios, a file plan, screenshots, and validation commands.

The Reviewer caught several issues before coding started:

- a new test would not be covered by the default command;

- the target page was not actually blank;

- product wording changes were hidden inside an implementation task;

- the handoff said an old command was removed, but the design document still kept it.

This is exactly the kind of issue that is cheap to fix before implementation and expensive to fix after.

Case 3: Integration Contract Before Implementation

A platform needed to integrate with another agent system. The Owner required staging, mock, and dry-run first.

The contract said unknown versions should fail closed, but the request body had no `contractVersion` field.

That mismatch matters. A safety rule that cannot be verified is not yet a real safety rule.
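For the rule to be verifiable, the receiver needs a field to check and a default of rejection. The sketch below shows what fail-closed handling could look like once a `contractVersion` field exists. The set of supported versions, the function name, and the return shape are assumptions for illustration, not the contract from the case.

```python
# Hypothetical allow-list; the real contract would define these values.
SUPPORTED_CONTRACT_VERSIONS = {"1"}


def accept_request(body: dict) -> tuple[bool, str]:
    """Fail closed: reject unless the version is present and explicitly supported."""
    version = body.get("contractVersion")
    if version is None:
        return False, "missing contractVersion"
    if not isinstance(version, str):
        return False, "contractVersion must be a string"
    if version not in SUPPORTED_CONTRACT_VERSIONS:
        return False, f"unsupported contractVersion: {version!r}"
    return True, "ok"
```

The design choice is that every path except an explicit match ends in rejection, so a missing field is treated the same way as an unknown version.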

Case 4: Human QA Feedback Becomes A Prototype Fix

A human reviewer found that a prototype interaction looked fine in the happy path but failed under real use.

Instead of treating that as a chat comment, ACS pushes the feedback into evidence:

- what was observed;

- what screenshot proves it;

- what changed;

- what command or manual check verified it;

- what rule should apply next time.

This prevents the same UI issue from being rediscovered by the next agent.

Anti-Patterns Matter Too

Successful cases are useful. Anti-patterns are often more useful.

ACS tracks failure modes such as:

- the agent approving its own work;

- green tests treated as final approval;

- evidence kept only in chat;

- handoff and design documents drifting apart;

- product-language changes disguised as implementation details;

- UI review without screenshots;

- unsafe public output.

When these anti-patterns are written down, agents can check against them before they repeat the same mistake.

Redaction Is Part Of The Workflow

ACS is meant to support public learning, but public case studies must be safe.

Keep:

- the goal;

- what the Executor did;

- what the Reviewer found;

- what the Owner decided;

- the risk that was blocked;

- the reusable rule.

Remove:

- customer names;

- private repository URLs;

- local absolute paths;

- API keys, tokens, cookies, and webhooks;

- private chat logs;

- unpublished business information.

This is important because the best engineering lessons often come from real projects, but real projects contain information that should not leave the team.

ACS tries to preserve the lesson without leaking the context.
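Part of the "Remove" list can be pre-screened automatically before a human reads the draft. The following is a rough sketch with two illustrative patterns of our own choosing. It will miss many cases, and items such as customer names or unpublished business information still need human review.

```python
import re

# Illustrative patterns only; a real redaction gate would need a broader set.
PATTERNS = {
    "local absolute path": re.compile(r"(?:/Users/|/home/|[A-Za-z]:\\Users\\)\S+"),
    "key/value secret": re.compile(
        r"\b(?:api[_-]?key|token|secret)\s*[:=]\s*\S+", re.IGNORECASE
    ),
}


def find_leaks(text: str) -> list[tuple[int, str]]:
    """Return (line number, label) pairs for lines that match a pattern."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, label))
    return hits
```

A non-empty result is a reason to stop and redact. An empty result is not proof that the text is safe to publish.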

Where ACS Fits

ACS is useful when an AI agent workflow touches real engineering assets:

- production code;

- customer-visible pages;

- deployment flows;

- upstream PRs;

- technical articles;

- public screenshots;

- shared internal knowledge;

- multi-agent handoffs.

It is probably too heavy for a one-off toy snippet.

But once a task involves repository state, release risk, public output, or multiple agents, the cost of missing evidence goes up quickly.

That is the boundary where ACS becomes practical.

Contributing Redacted Cases

We are maintaining ACS as an open engineering practice project:

https://github.com/kunpeng-ai-lab/agent-collaboration-sop

The most useful contributions are not abstract opinions. They are redacted cases:

- what went wrong;

- what evidence caught it;

- what the reviewer checked;

- what the owner decided;

- what rule should be reused next time.

If you are running multi-agent engineering workflows, especially with Codex, Claude Code, OpenClaw, Hermes, or similar tools, this is the kind of operational knowledge worth preserving.

The main article (in Chinese) and project context are here:

https://kunpeng-ai.com/blog/agent-collaboration-sop-acs-case-library/

ACS is not trying to make agents replace engineering judgment.

It is trying to make agent-assisted engineering reviewable.

Key Takeaways

- A passing test run is useful evidence, but it does not prove the work is ready to ship.

- Chat logs are not durable engineering records. Decisions and verification should land in files.

- A Reviewer Agent should check goal alignment, scope, architecture, tests, screenshots, evidence, redaction, and release risk.

- ACS turns one successful collaboration loop into reusable team memory.


FAQ

Is ACS tied to one AI coding agent?

No. ACS is vendor-neutral. It can be used with Codex, Claude Code, OpenClaw, Hermes, or any agent that can read files, edit code, run commands, write handoffs, and record evidence.

Is ACS too heavy for small tasks?

For a tiny code snippet, yes. For a real repository, deployment, PR, client-visible artifact, or public post, the evidence and review gates usually save more time than they cost.

Do public ACS case studies need to expose real projects?

No. Public case studies should be redacted. Keep the decision path, evidence pattern, blocked risk, and reusable rule. Remove customer names, private repositories, local paths, secrets, private chat logs, and unpublished business information.